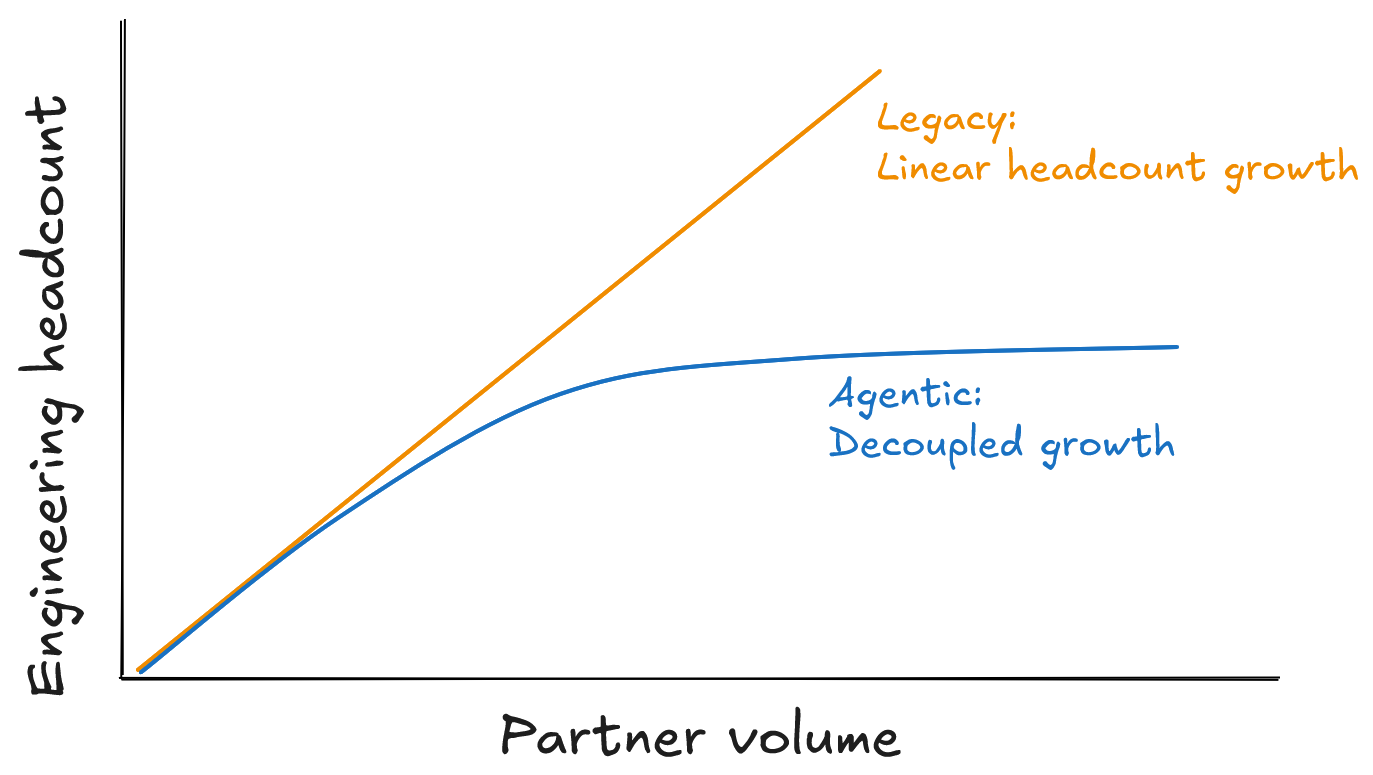

Agentic consumption: Decoupling growth from headcount

How agentic AI increases sales efficiency

Agentic AI, specifically the models released at the end of 2025, has fundamentally changed the landscape for professional services. Integration services are often billable in SaaS and necessary in B2C, but they tie growth linearly to headcount. The result is a services company disguised as a product company.

In this environment, we prioritise engineering resources for large partnerships to secure higher ROI, but this creates a strategic blind spot: it ignores the long tail of agile, tech-savvy partners ready to integrate and scale alongside us.

The legacy approach of proprietary data silos is no longer viable. When the source of truth and the documentation live only in the heads of the people performing the manual mapping, the business carries a systemic risk. Furthermore, partners often lack the incentive to maintain these integrations, so we bear the full cost of maintenance when their systems change without notice.

This is a clear opportunity cost. When a competitor automates this mapping (and this is no longer a question of if, but when), the traditional billable model becomes a liability that slows down our time to market and leads to an inevitable loss of market share.

From strict validation to semantic interpretation

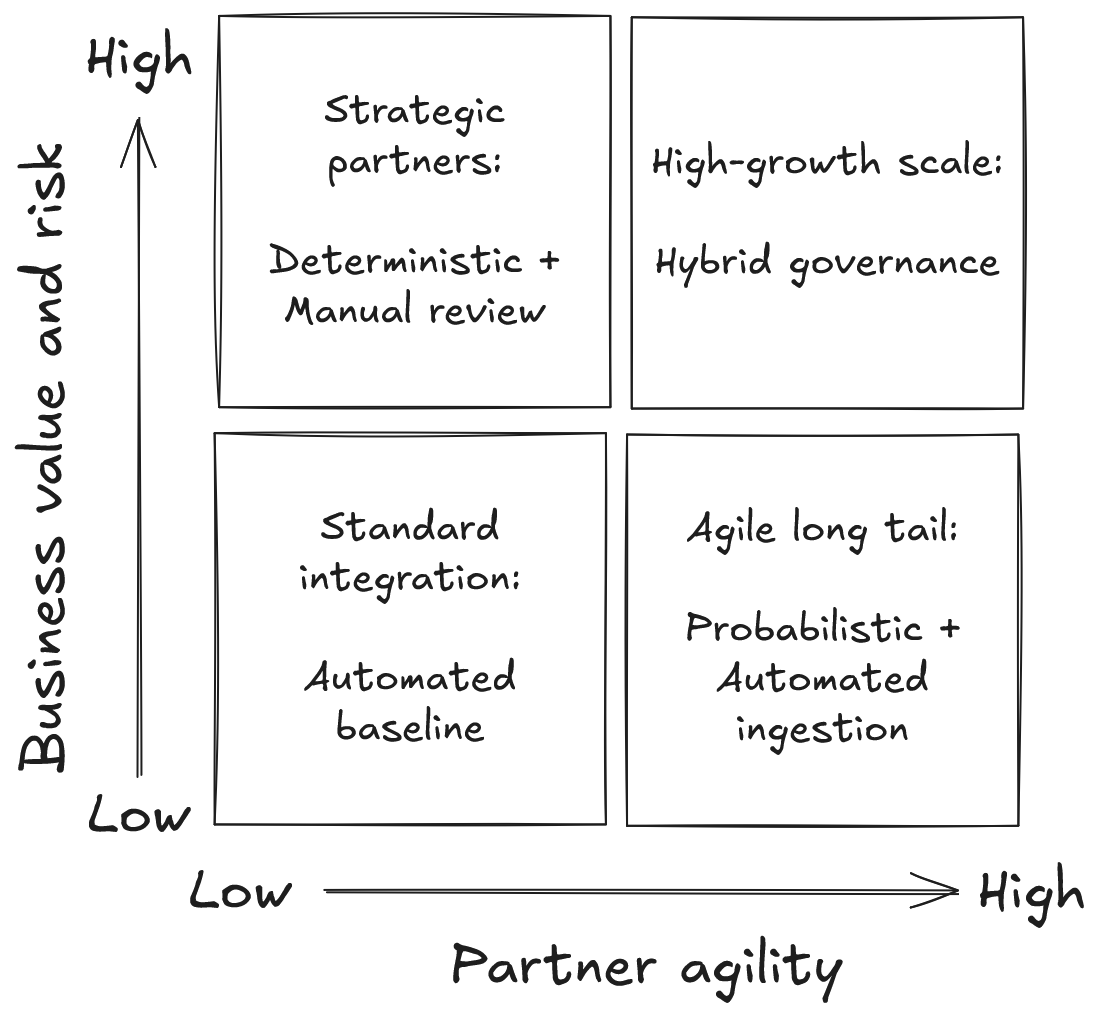

Traditional schema validation based on JSON or XML is too rigid when you face the reality of hundreds of different partner formats. While this approach works for a handful of corporate partners, it doesn’t scale to the long tail. Until very recently, the only option was constant prioritisation and hoping that engineering capacity would eventually be found for smaller integrations.
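To make the rigidity concrete, here is a minimal sketch of a strict validator. The canonical fields (`order_id`, `amount`, `currency`) and both payloads are hypothetical, and the hand-written check is only a stand-in for a real JSON or XML schema validator.

```python
# Hypothetical canonical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"order_id": str, "amount": float, "currency": str}


def strict_validate(payload: dict) -> list[str]:
    """Return every violation of the canonical schema (empty list means valid)."""
    errors = []
    for name, expected_type in REQUIRED_FIELDS.items():
        if name not in payload:
            errors.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            errors.append(f"wrong type for field: {name}")
    for name in payload:
        if name not in REQUIRED_FIELDS:
            errors.append(f"unexpected field: {name}")
    return errors


# A corporate partner that was mapped by hand to the canonical schema.
corporate_payload = {"order_id": "A-1", "amount": 10.0, "currency": "EUR"}

# A long-tail partner sending the same information under different names.
long_tail_payload = {"orderRef": "A-1", "total": 10.0, "curr": "EUR"}

corporate_errors = strict_validate(corporate_payload)
long_tail_errors = strict_validate(long_tail_payload)
```

The second payload carries the same meaning as the first, yet every one of its fields is rejected. Each such partner needs its own manual mapping before strict validation can pass.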

But agentic AI has disrupted the integration landscape. We must move away from the false choice between perfect data and fast onboarding. Instead, we build architectures that ingest context and intent, accepting a manageable margin of error in exchange for scale that is no longer bounded by engineering capacity.

This shift requires providing context and intent directly to agents via MCP, allowing them to understand why a field exists rather than just its data type. It also requires a deliberate trade-off: sacrificing the deterministic perfection of manual mapping for the non-deterministic scale of probabilistic ingestion. By applying variable governance (rigorous manual review for high-value contracts and automated paths for the long tail) we maintain a measurable risk profile without restricting growth.
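As an illustration of variable governance, the sketch below routes a partner to a review path based on contract value. The `Partner` type, the `annual_contract_value` field, and the threshold figure are assumptions made for the example, not values from our contracts.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewPath(Enum):
    MANUAL_REVIEW = "manual_review"  # rigorous review for high-value contracts
    AUTOMATED = "automated"          # probabilistic ingestion for the long tail


@dataclass(frozen=True)
class Partner:
    name: str
    annual_contract_value: float  # hypothetical field used for illustration


# Illustrative cut-off; a real figure would come from the business risk appetite.
HIGH_VALUE_THRESHOLD = 100_000.0


def governance_path(partner: Partner, threshold: float = HIGH_VALUE_THRESHOLD) -> ReviewPath:
    """Pick the governance path for a partner from its contract value."""
    if partner.annual_contract_value >= threshold:
        return ReviewPath.MANUAL_REVIEW
    return ReviewPath.AUTOMATED
```

Keeping the threshold as a single explicit parameter means the risk profile can be tightened or relaxed without touching the ingestion logic.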

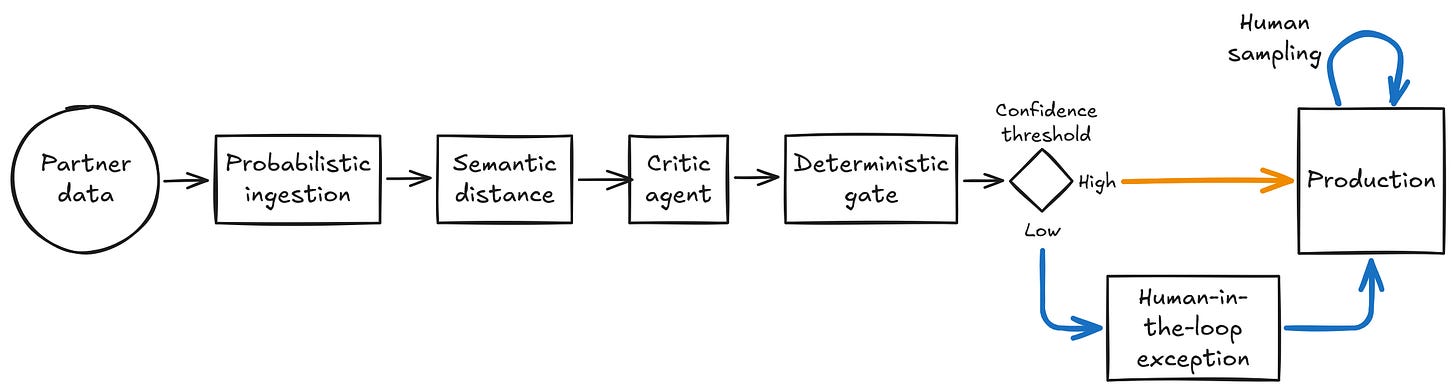

Managing risk with multi-layered validation

Many parts of an organisation, especially outside of technology, assume that testing should reduce error to zero. However, testing is only one layer in a broader strategy to reduce the risk and impact of errors. Moving to agentic consumption requires implementing additional layers of testing, specific governance for non-deterministic systems, and a new set of technical checks.

As the architecture shifts to a probabilistic model, communication and MTTR (mean time to restore) become even more critical. A clear narrative helps the wider organisation understand this new way of working by explaining the strategic reasons for the shift, the benefits of scale, and the specific resourcing plan to address inevitable issues. Because we expect more issues, MTTR becomes our main lever for stability. Hallucinations will occur, much as bugs are released in traditional systems, so the organisation must be ready to weigh the positive impact of moving faster against the negative impact of the occasional issues caused by an automated, non-deterministic architecture. In this environment, the speed of recovery often has a larger business impact than the prevention of every individual error.

Maintaining quality in a non-deterministic system requires the same domain expertise held by our current teams. We shouldn’t outsource this to a siloed AI squad. Instead, we should embed that expertise directly into the new reliability guardrails.

To move the conversation from hoping the model is right to a rigorous engineering standard, the system relies on a confidence score generated through multiple independent validation layers. Because we cannot trust the model’s own self-assessment, the architecture uses semantic distance to compare outputs from different models and a dedicated critic agent to audit the results against a strict set of business constraints. These results are then passed through a final deterministic gate (a standard schema validator) to ensure the structural integrity of the output.
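One way these layers could combine into a single score is sketched below, under several assumptions. A plain field-agreement ratio stands in for semantic distance between two models' outputs, deterministic constraint functions stand in for the critic agent, and a hand-written check stands in for the schema validator. The canonical targets and the choice of `min` as the combining rule are illustrative.

```python
from typing import Callable

FieldMapping = dict[str, str]  # partner field name -> canonical field name

# Hypothetical canonical schema that every accepted mapping must cover.
REQUIRED_TARGETS = {"order_id", "amount", "currency"}


def agreement(a: FieldMapping, b: FieldMapping) -> float:
    """Share of source fields that two independent models map identically.

    Simplified stand-in for a semantic-distance comparison.
    """
    fields = set(a) | set(b)
    if not fields:
        return 0.0
    same = sum(1 for f in fields if f in a and f in b and a[f] == b[f])
    return same / len(fields)


def critic_pass_rate(mapping: FieldMapping,
                     constraints: list[Callable[[FieldMapping], bool]]) -> float:
    """Share of business constraints the mapping satisfies (critic stand-in)."""
    if not constraints:
        return 1.0
    return sum(1 for check in constraints if check(mapping)) / len(constraints)


def passes_schema_gate(mapping: FieldMapping) -> bool:
    """Deterministic gate: all required targets present, none assigned twice."""
    targets = list(mapping.values())
    return REQUIRED_TARGETS.issubset(targets) and len(targets) == len(set(targets))


def confidence(primary: FieldMapping, secondary: FieldMapping,
               constraints: list[Callable[[FieldMapping], bool]]) -> float:
    """Combine the layers; the weakest layer bounds the score (an assumption)."""
    if not passes_schema_gate(primary):
        return 0.0  # structural failure overrides every other signal
    return min(agreement(primary, secondary), critic_pass_rate(primary, constraints))
```

Taking the minimum is a conservative choice: a mapping is only as trustworthy as its weakest layer, and none of the inputs is the model's own self-assessment.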

This multi-layered mechanism transforms non-deterministic output into a measurable risk profile. It allows the organisation to set precise thresholds: high-confidence mappings move to production automatically under asynchronous human oversight, while low-confidence results trigger a human-in-the-loop exception. This ensures human expertise is reserved for resolving complex ambiguities rather than performing repetitive, synchronous validation.
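The threshold routing described above can be sketched in a few lines. The `Route` names and the 0.9 cut-off are hypothetical; in practice the threshold would be tuned against observed error rates.

```python
from enum import Enum


class Route(Enum):
    PRODUCTION = "production"      # released automatically, audited afterwards
    HUMAN_REVIEW = "human_review"  # human-in-the-loop exception


# Illustrative threshold, not a recommended value.
AUTO_APPROVE_THRESHOLD = 0.9


def route(confidence: float, threshold: float = AUTO_APPROVE_THRESHOLD) -> Route:
    """Send a mapping to production or to a reviewer based on its score."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError(f"confidence must be in [0, 1], got {confidence}")
    return Route.PRODUCTION if confidence >= threshold else Route.HUMAN_REVIEW


def review_queue(scores: dict[str, float]) -> list[str]:
    """Partners needing human attention, lowest confidence first."""
    flagged = [name for name, score in scores.items()
               if route(score) is Route.HUMAN_REVIEW]
    return sorted(flagged, key=lambda name: scores[name])
```

Sorting the exception queue by score puts the most ambiguous mappings in front of domain experts first.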

From linear costs to competitive advantage

Agentic consumption decouples headcount from partner volume, ending a cycle where small differences between partners made code reuse impossible and created a hard growth ceiling. While a low-growth company might use these gains to reduce costs, the strategic choice is to reinvest this capacity into the reliability and tooling required to manage a non-deterministic ingestion engine. This shift moves engineering focus from delivering bespoke mappings to improving the system success rate. However, it creates a new constraint: the need for advanced observability into the integrity of the agents’ reasoning. The next strategic priority is building the observability stack required to audit these autonomous decisions at scale.

By removing the onboarding bottleneck, we ensure that customers pay earlier and sales efficiency increases. This shifts integration from a capacity constraint to a competitive advantage. In this new environment, engineering leadership wins not by shipping code, but by governing the systems that ship it for us.