Org design for the agentic SDLC: Machine-readable context as the primary asset

Code is now a secondary asset. How to redefine the boundaries between team roles when the agents write the syntax

Implementing an agentic SDLC accelerates delivery, but it immediately moves the bottleneck from code generation to requirement ambiguity. In the legacy SDLC, human engineers act as a translation layer, using domain knowledge to patch vague tickets before writing code. We can accept that engineers will no longer type code, but unlike those engineers, autonomous agents cannot detect missing context. If a PM writes a vague requirement, the agent will flawlessly compile it into technical debt. To manage this trade-off, we must move beyond individual prompting and treat context engineering as a foundational architectural concern. The entire organisation must shift to defining clear, deterministic intent and embedding governance into machine-readable rules that the agents can access.

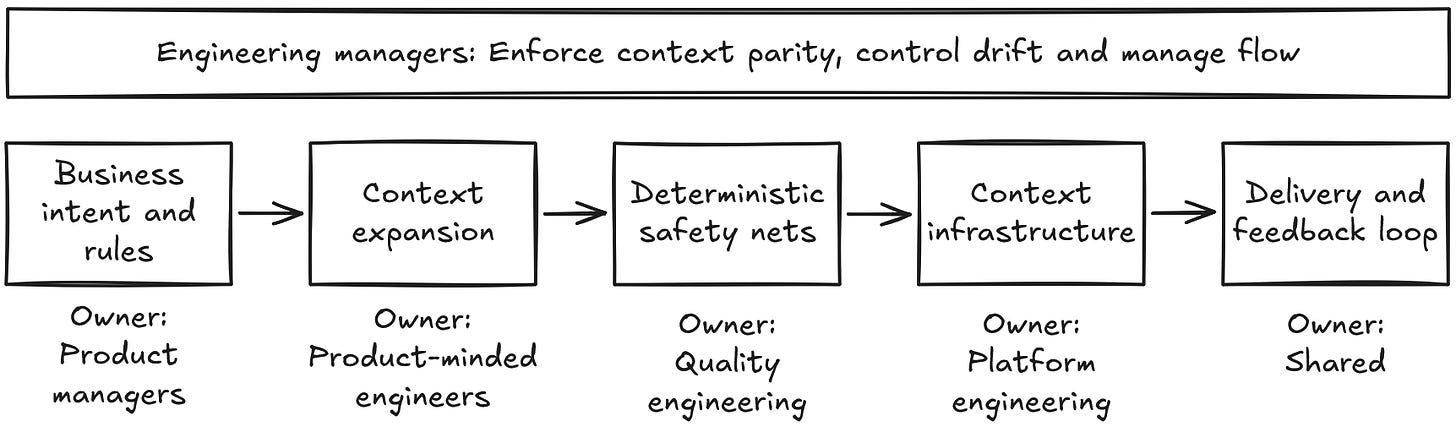

The core role changes

Shifting to an agentic SDLC changes not only the engineers' role but every role in and around the engineering team. Below I focus on the core roles:

Product managers: They own the core deterministic business rules and product discovery. As team velocity multiplies, PMs cannot scale to define every edge case. They must delegate the exhaustive expansion of logic (e.g. edge cases) to the engineering team. To manage the change in responsibility (Product now holds accountability for specification failures), leadership must redefine the delivery process: Product approves context assets via the standard PR process. This reduces political friction by creating a clear boundary: Product signs off on the rules, and Engineering scales the execution.

Product-minded engineers: The fundamental change is the shift from generating code to context engineering. They take the PM’s core rules and expand them into exhaustive edge cases, own the curated shared instructions (e.g. AGENTS.md), and keep these assets in version control alongside the code, subject to the same PR scrutiny. They also perform human-in-the-loop (HITL) reviews, steadily phasing out manual smoke tests.

Engineering managers: Their core mandate to deliver high-quality, secure software remains, but the execution changes drastically. EMs now ensure system flow by keeping context assets synced with the codebase, actively controlling architectural drift, and preventing context bloat.

Quality engineering: They embed governance directly into the team processes. They build the agentic harness to reduce architectural drift, enforce non-functional requirements (NFRs), and encode security policies. By integrating deterministic analysis tools directly into the delivery pipeline, they build automated safety nets that validate machine output at scale.

Platform engineering: They provide context infrastructure at scale. They supply sandboxed execution environments (e.g. restricted microVMs or containers per PR) and build the automated FinOps guardrails with default token circuit breakers that automatically kill out-of-control workflows. This infrastructure empowers EMs to track and tweak their team’s operational token budgets safely, without risking runaway platform spend.
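To make the token circuit breaker concrete, here is a minimal sketch in Python. The class name, the default budget of 200,000 tokens, and the `run_workflow` helper are all illustrative assumptions, not a reference to any particular platform's API; a real implementation would read usage from the model provider and terminate the sandbox when the breaker trips.

```python
from dataclasses import dataclass


class TokenBudgetExceeded(RuntimeError):
    """Raised when a workflow spends more tokens than its budget allows."""


@dataclass
class TokenCircuitBreaker:
    # Hypothetical default: the platform team sets it, EMs tune it per team.
    budget: int = 200_000
    spent: int = 0
    tripped: bool = False

    def record(self, tokens: int) -> None:
        """Record one agent step's usage and trip once the budget is exceeded."""
        if self.tripped:
            raise TokenBudgetExceeded("breaker already tripped; workflow must stop")
        self.spent += tokens
        if self.spent > self.budget:
            self.tripped = True
            raise TokenBudgetExceeded(
                f"spent {self.spent} tokens against a budget of {self.budget}"
            )


def run_workflow(step_costs, breaker):
    """Run steps until done or the breaker trips; return the completed step count."""
    completed = 0
    for cost in step_costs:
        try:
            breaker.record(cost)
        except TokenBudgetExceeded:
            break  # a real platform would also tear down the sandbox here
        completed += 1
    return completed
```

The design choice worth noting is that the default lives with the platform while the budget value stays a parameter, which is what lets an EM adjust a team's allowance without touching the kill logic.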

Managing the messy middle of agentic adoption

Migrating to an agentic SDLC requires operating in a prolonged hybrid state. Teams will context-switch between agent-driven greenfield services and human-driven legacy components, often within the same sprint. Not every system can, or should, migrate to an agentic workflow.

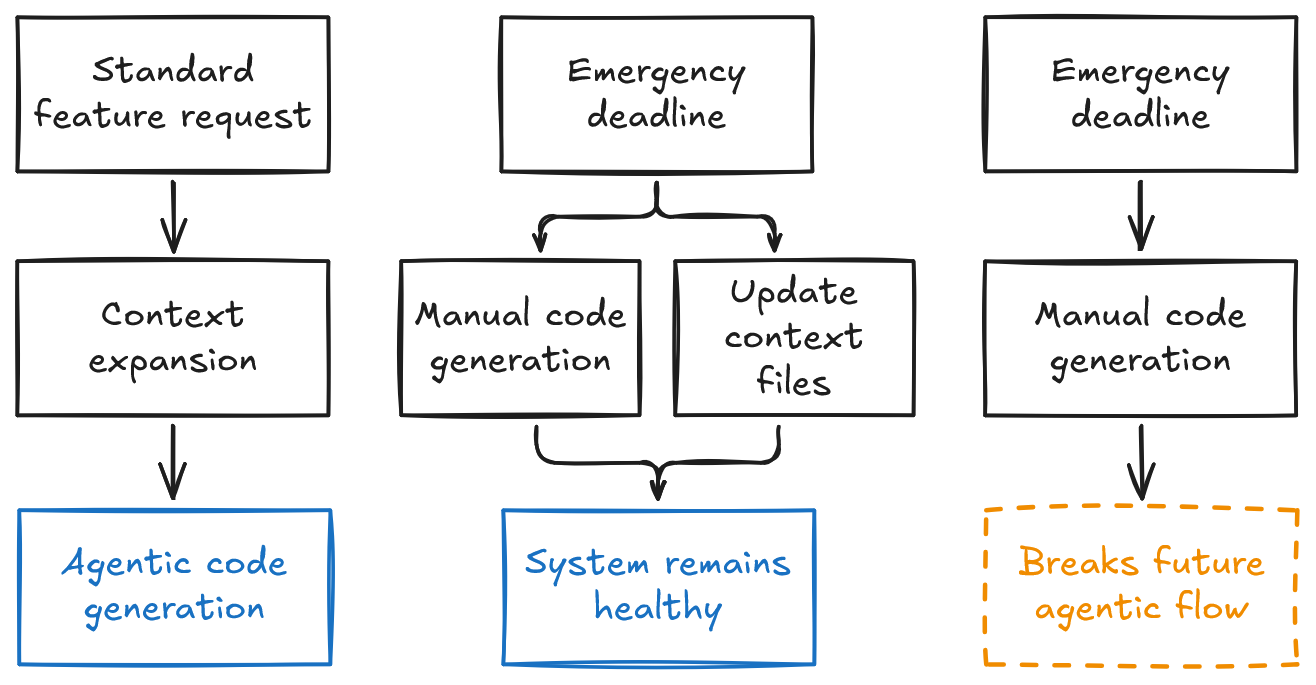

Even within agent-driven boundaries, a high-performing engineer will eventually bypass the system to manually write a feature and hit a tight deadline. Leaders should not misinterpret this as cultural pushback against the agentic transition; in a high-velocity environment, this manual override is a rational business trade-off.

To manage this, leadership must enforce synchronisation between the code and the machine-readable context files. The process supports manual PRs during a crisis, but it requires the engineer to update the deterministic, version-controlled context assets at the same time. If an engineer ships code without updating the context, every later agent run works from instructions that no longer match the codebase. We treat bypassing context updates exactly like breaking the deployment pipeline.
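One way to enforce this rule mechanically is a pipeline check that fails when code changes without its context file. The sketch below assumes a hypothetical repository layout in which each top-level directory is a domain with its own AGENTS.md; the file suffix list and the function name are illustrative as well.

```python
from pathlib import PurePosixPath

# Hypothetical convention: each domain directory keeps its context in AGENTS.md.
CONTEXT_FILENAME = "AGENTS.md"
CODE_SUFFIXES = {".py", ".ts", ".go", ".java"}


def domains_missing_context_update(changed_files):
    """Return domains whose code changed in a PR while their context file did not."""
    code_domains = set()
    context_domains = set()
    for name in changed_files:
        path = PurePosixPath(name)
        if len(path.parts) < 2:
            continue  # top-level files belong to no domain in this sketch
        domain = path.parts[0]
        if path.name == CONTEXT_FILENAME:
            context_domains.add(domain)
        elif path.suffix in CODE_SUFFIXES:
            code_domains.add(domain)
    return sorted(code_domains - context_domains)
```

In a pipeline, the input could be the output of `git diff --name-only` against the base branch, with a non-empty result failing the build. A check like this only proves that the context file was touched, not that it is accurate, so it complements PR review rather than replacing it.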

While an individual override is a rational trade-off, repeated overrides in the same domain are a system signal. They indicate that the context is weak, or that the agentic workflow is fundamentally misaligned with delivery pressure.
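Spotting that signal can be as simple as counting overrides per domain. The following sketch assumes each manual override is logged with the domain it touched; the threshold of three is an arbitrary illustrative value that a team would calibrate for itself.

```python
from collections import Counter


def domains_to_review(override_domains, threshold=3):
    """Return domains with at least `threshold` manual overrides, sorted by name."""
    counts = Counter(override_domains)
    return sorted(domain for domain, n in counts.items() if n >= threshold)
```

For example, `domains_to_review(["billing", "search", "billing", "billing"])` flags only `billing`, which is where the context or the workflow deserves a closer look.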

The residual systemic risks

As the agentic SDLC matures, residual systemic risks that initially were less critical start adding up. Below are two of those risks:

Loss of the mental map: As engineers write specifications instead of code, they lose the deep understanding of the codebase needed to debug critical incidents. Similarly, as manual testing is phased out, the UX intuition provided by human QA disappears. This means that during a severe outage, engineers must navigate and fix agent-generated code without the fallback of a deep mental map or manual sanity checks. This will impact MTTR unless the organisation invests heavily in agentic incident orchestration.

Context rot: Dumping raw data into large context files triggers context rot and wastes tokens. Worse, as business logic scales, engineers might accidentally invent a rigid, inefficient domain-specific language just to prompt agents effectively. Teams must fight these anti-patterns by building progressive context pipelines, for example starting with a lightweight index that the agent uses to retrieve only the necessary context. This is also where architecture becomes a machine-readable constraint. Leaders must enforce small domain boundaries (e.g. microservices) not just to scale human teams, but to reduce the cognitive load and limit the context window required by the AI agent.
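The lightweight index idea can be sketched in a few lines. Everything here is an assumption made for illustration: the file paths, the keyword sets, and the use of plain keyword overlap as a stand-in for whatever retrieval method a team actually adopts.

```python
# Hypothetical index: one short entry per context file, cheap to load on every run.
CONTEXT_INDEX = {
    "billing/refunds.md": {"refund", "chargeback", "credit"},
    "billing/invoices.md": {"invoice", "tax", "vat"},
    "search/ranking.md": {"ranking", "relevance", "query"},
}


def select_context(task, index, max_files=2):
    """Return paths whose keywords overlap the task text, best match first."""
    words = set(task.lower().split())
    scored = [(len(words & keywords), path) for path, keywords in index.items()]
    matches = [(score, path) for score, path in scored if score > 0]
    # Highest overlap first; ties broken alphabetically for stable results.
    matches.sort(key=lambda item: (-item[0], item[1]))
    return [path for _, path in matches[:max_files]]
```

The agent loads only the index up front and fetches the selected files afterwards, so the cost of a run scales with the task rather than with the total size of the context repository. The `max_files` cap plays the same role as a small domain boundary: it bounds how much context a single task can pull in.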

The agentic SDLC is fundamentally an organisational design challenge, where machine-readable context is the main asset. This, however, introduces a new constraint: engineering leadership must now build the guardrails to govern the cognitive load, architectural drift, and financial cost of managing this context at scale. The organisation shifts from accelerating human output to maintaining the integrity of the instructions driving it.